Recently I got myself into a position where I lost a VMFS partition containing some vital lab VMs. Unfortunately, this was during a quick migration, where the words “what’s the worst that can happen?” were used.

Unfortunately the worst was data loss, and this is what happened.

Please note: this is another example of how, if you do daft things, you shouldn’t be surprised when they come back to bite you. If you do this in a lab, shame on you. If you do it in production, shame on your boss for not firing you…

The aim

Despite a successful trial project using EMC ScaleIO, I decided to migrate my data back to VSAN. Whilst ScaleIO worked admirably (certainly for the cost), it couldn’t match VSAN in terms of performance and manageability.

As I just needed to rebuild the software-defined storage and not each host, I powered off and migrated most of my lab VMs to a large SATA drive (not clever). Each host had a 120GB boot SSD installed, so I created a VMFS partition on each using the remaining free space (approx. 112GB) and moved a handful of essential VMs (domain controller, virtual firewall, vCenter) to each one. As this was only a temporary move, what’s the worst that could happen?

With the ScaleIO datastores empty I decommissioned the installation, put each host into maintenance mode and shut it down.

Here be dragons…

Unfortunately, after a reboot, the first host would not mount the VMFS partition. No amount of running vmkfstools -V would mount the datastore, even though partedUtil verified the partition table was fine. It also showed that the VMFS partition was there; the host just refused to mount it.

I checked the VMFS partition using VOMA, specifically:

voma -m vmfs -f check -d /vmfs/devices/disks/naa.600507630080819ba000000000000000:9

Unfortunately all I got back was a list of stale locks. I tried breaking the locks (which worked), but that still left me with a hosed system. Finally I called VMware support, and after a few hours the partition was pronounced dead. Time of death… when I shut the host down.

I’d lost entire partition tables before, so I couldn’t understand how the partition table could be intact while the VMFS partition was corrupt… and how there was therefore nothing I could do.

So I got to work.

Recovering the data

Boot into your Linux distro of choice. For quick and easy stuff like this I PXE-boot Ubuntu direct from my LAN, but use RHEL/CentOS for most lab work. For this demo I’ll assume you’re using the former. The following assumes:

- You’re using Ubuntu

- It’s an x86_64 version

- You have root privileges

Using a terminal, download the vmfs-tools package from a mirror (substitute _i386.deb for non 64-bit systems):

wget http://mirrors.kernel.org/ubuntu/pool/universe/v/vmfs-tools/vmfs-tools_0.2.5-1_amd64.deb

If you use apt-get to install a repo version, it’ll be an outdated one that is only good for reading VMFS 3 partitions. And that doesn’t happen often these days!

Install the package using (substitute accordingly):

dpkg -i vmfs-tools_0.2.5-1_amd64.deb
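The architecture substitution mentioned above can be scripted. What follows is a minimal sketch, assuming the mirror path and the 0.2.5-1 version from the wget command are still valid and that the i386 build sits alongside the amd64 one. The function name `vmfs_tools_url` is purely illustrative and not part of any package.

```shell
#!/bin/sh
# Sketch only: build the vmfs-tools download URL for a given architecture.
# Assumes the mirror path and version used in this post; vmfs_tools_url is
# a made-up helper name.
vmfs_tools_url() {
    arch=$1
    case "$arch" in
        amd64|i386) ;;
        *) echo "unsupported architecture: $arch" >&2; return 1 ;;
    esac
    echo "http://mirrors.kernel.org/ubuntu/pool/universe/v/vmfs-tools/vmfs-tools_0.2.5-1_${arch}.deb"
}

# Print the URL for a 64-bit system.
vmfs_tools_url amd64
```

On Ubuntu, `dpkg --print-architecture` reports the architecture, so something like `wget "$(vmfs_tools_url "$(dpkg --print-architecture)")"` should fetch the matching package.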

Plug your disk into the computer. Here I am using a device which converts SATA & IDE to USB:

image courtesy of me doing dumb sh**

Keep an eye on the syslog and note the device name the disk is given (/dev/sdc in my case):

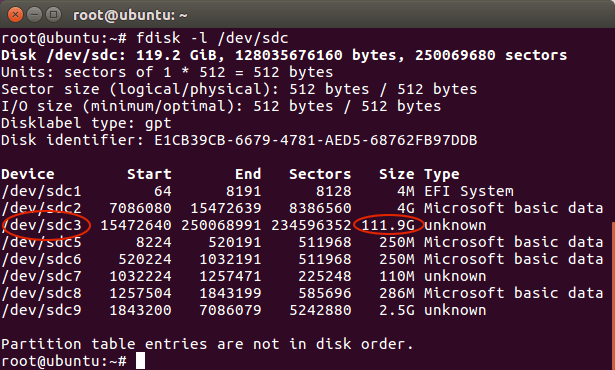
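If you would rather not eyeball the log, the device name can be filtered out of the kernel messages. This is a rough sketch, assuming the disk shows up as a SCSI-style `sdX` device (as USB adapters normally do) and that the kernel logs its usual "Attached SCSI disk" line. `attached_disks` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch only: pull the device names of newly attached disks out of kernel
# log text read from stdin. attached_disks is a hypothetical helper name.
attached_disks() {
    # Kernel lines look like: "sd 6:0:0:0: [sdc] Attached SCSI disk"
    sed -n 's/.*\[\(sd[a-z][a-z]*\)\] Attached SCSI.*/\1/p'
}

# Example usage (may need root to read the kernel log):
#   dmesg | attached_disks
```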

View the partition table of the disk using:

fdisk -l /dev/sdc

In the following example we see two “unknown” partitions; however, we know the one we want is approximately 112GB and is therefore /dev/sdc3:
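Picking the partition by size can also be done mechanically. Here is a small sketch that reads "name size-in-bytes" pairs and prints the names close to a target size. It assumes sizes are compared in GiB (whether the 112GB above is GB or GiB is not stated, hence the tolerance), and `partitions_near_size` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch only: read "NAME SIZE_IN_BYTES" lines on stdin and print the names
# whose size is within TOLERANCE GiB of TARGET GiB.
# partitions_near_size is a hypothetical helper name.
partitions_near_size() {
    awk -v target="$1" -v tol="$2" '
        BEGIN { gib = 1024 * 1024 * 1024 }
        {
            diff = $2 - target * gib
            if (diff < 0) diff = -diff
            if (diff <= tol * gib) print $1
        }'
}

# Example usage against the disk from this post. The whole-disk entry is
# listed too and may also match on a small disk, so ignore it if it does:
#   lsblk -b -l -n -p -o NAME,SIZE /dev/sdc | partitions_near_size 112 5
```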

Create a mount point for the files we wish to recover:

mkdir -p /mnt/vmfs

Mount the damaged partition:

vmfs-fuse /dev/sdc3 /mnt/vmfs

You can now list the contents of the partition:
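Once the listing looks right, the files still need to be copied somewhere safe. Below is a minimal sketch of that step, assuming a destination with enough free space. The destination path and the helper name `recover_files` are my own placeholders, not something vmfs-tools provides.

```shell
#!/bin/sh
# Sketch only: copy everything under a mounted datastore to a safe location.
# recover_files is a hypothetical helper name; both paths are supplied by you.
recover_files() {
    src=$1
    dst=$2
    [ -d "$src" ] || { echo "not a directory: $src" >&2; return 1; }
    mkdir -p "$dst" || return 1
    # "$src"/. copies the directory contents, including hidden files.
    cp -R "$src"/. "$dst"/
}

# Example usage (the destination path is an assumption):
#   recover_files /mnt/vmfs /mnt/recovered
#   fusermount -u /mnt/vmfs   # unmount the FUSE filesystem when finished
```

Virtual disk files can be large, so expect the copy to take a while over a USB adapter.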

All done

I was extremely pleased to get that data back, despite being told categorically by VMware Support that it was lost.

Now that I have invested in a Synology DS1515+, I doubt I’ll be making the same mistake again.

By the way, if you’re in the vExpert Slack, don’t even start…. 😉

Oooh lucky! Tough read.

Interesting that you’re moving back to VSAN. Have you seen the new very small Supermicro SYS-E200-8D servers that have just come out? They’re amazing and have me interested in VSAN again.

This little SYS-E200-8D server only takes two drives (one M2 and one 2.5″ SATA) so you could use it as a VSAN server (M2 for cache and 2.5″ SATA for capacity).

Realistically (in a lab) how many VMs do you think you could run on a VSAN node with a single SSD disk? Is 10 VMs too many?

I have. I’m not sure about how I feel about them. The E300 hosts look a lot like my current hosts.

If you get an E200 and use the M2 for cache and the 2.5 for capacity… where are you gonna install ESXi? If there’s no internal USB drives then it’ll mean an external stick… :-S

Regarding the number of VMs… I guess “it depends”. A Windows Server 2008 R2 DC is going to need a lot more resource than a SQL 2016 box that’s getting hammered… 😉

-Mark

I still have a couple more months of researching my options so I’m going to “wait and see” before deciding! Nice that we have all these options. For the E200 I was just going to use an external bootable USB drive for ESXi. Been doing that for a year now on my current server with no issues.

I am concerned about the limits the E200 has like only two hard drives. The fan noise is a concern too. I like the SYS-5028D-TN4T more but they are more expensive (but are quieter and have up to 8 drives!).

With the SYS-5028D-TN4T you could setup two disk groups in VSAN with all those disks…hmmmmmm IOPS!

It’s amazing to think that 8 of the E200 servers would give you a cluster with 1TB of RAM in it….

Great article, simple and well explained

I tried this with a datastore that suddenly disappeared in ESXi 5, without there having been any problems with the RAID.

I was able to mount another partition / LUN without problems, but the one I need returns this error:

VMFS: Warning: Lun ID mismatch on /dev/sdd1

VMFS: Unable to read FS information

Unable to open filesystem

I was dumb enough to delete a production VM… I panicked and instantly forced off all running VMs so I could prevent the deleted VM from being overwritten… Now, is there a way to UNDELETE the VM…

My datastore (datastore-01) is on a local ATA RAID10…

I am about to boot a Linux live DVD and undelete the VM on the VMFS5… is that even possible???

So, some time has passed since this article and it no longer works with VMFS 6. There is a package, vmfs6-tools, that will allow the same thing. Additionally, the dependencies of the vmfs6 package require Ubuntu 19.x. The command names are also now vmfs6xxxx (e.g. vmfs6-fuse). Thanks for a great start to get the data back from an SSD drive that developed bad sectors and would PSOD the ESX host on access.

Holy sh*t it worked. You made my day.
