Recently I’ve been using vCloud Air a lot, both for proofs-of-concept and for spinning up my own lab machines. But for some reason I just don’t gel with the UI. It’s not particularly bad; I just don’t find it very intuitive. So I set off in search of another tool, and found the VCA-CLI.

vCloud Air

Creating a VPN between a Cisco ASA and vCloud Air

In preparation for an upcoming project with my resident Code Monkey, I decided it was time to link the lab to my vCloud Air instance using a VPN. However, as GUI access to the firewalls is disabled in the lab, the on-premises configuration will have to be done using the CLI.

Using vCloud Air to stand-up a Chef Compliance Proof-of-Concept

The other day I wanted to start playing with Chef, as it’s a tool our company is about to use a lot, and it’s one area where I’m quite behind the curve. Unfortunately, the lab was maxed out on resources, so I turned to vCloud Air to rapidly provision an environment.

Wednesday Tidbit: Connecting vCenter to vCloud Air

Recently I signed up with VMware vCloud Air, as I want to use it as an endpoint for machines provisioned in the lab by vRealize Automation. Before I could get to that, I needed to link my vCenter to vCA, which turned out to be trickier than I first imagined…

Man down… when you need to DR your DR

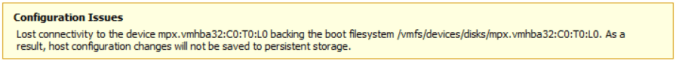

Last week I received the following error on my DR ESXi host:

The host in question is a Dell PowerEdge R710, and the error indicated a hardware failure of some sort.

mpx.vmhba32:C0:T0:L0 is an 8GB SanDisk SD card containing the ESXi 5.5 boot partitions. With it gone, the host would keep running, but any changes wouldn’t be saved. It was also unlikely that the host would boot up again.

Sure enough, this turned out to be the case: three times out of four, the server would hang at the BIOS. To remedy this, I disconnected the SD card reader and ordered a replacement.

Unfortunately, the SD card reader turned out to be a red herring: the replacement wasn’t recognised either, and neither was the internal USB stick. After further testing, I concluded that the control panel (a daughterboard that the SD card reader, internal USB and console port plug into) was at fault. After replacing that, the server booted normally into ESXi.

The total downtime was about ten days, which meant that when the remote vCenter and vSphere Replication servers came back up, there was a lot of data that needed to be replicated. This hammered my internet connection, so much so that I couldn’t even bring up the vCenter Web Client to monitor how the replication was going.

To find out how it was going, I had to switch to the command line.

On the source host I used the following command to get the list of VMs and their associated VM IDs:

```shell
vim-cmd vmsvc/getallvms
```

This gives an output similar to:

With each VM ID, I then used the following command to get the status of its replication:

```shell
vim-cmd hbrsvc/vmreplica.getState vmid
```

That told me exactly how much had replicated and how much was left to do. With a total of thirteen VMs to replicate, that was going to take a while!
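Reading the IDs off the listing by hand works, but with thirteen VMs it may be easier to pull them out of the `getallvms` output automatically. The following is a minimal sketch. It assumes that the Vmid is the first column of that output, below a header row, and that `awk` is available in the host’s BusyBox. `list_vmids` is a hypothetical helper name, not a VMware tool.

```shell
# list_vmids: hypothetical helper that reads `vim-cmd vmsvc/getallvms`
# output on stdin and prints one numeric VM ID per line.
# Assumes the Vmid is the first column; the header row and any wrapped
# annotation lines don't start with a number, so awk skips them.
list_vmids() {
    awk '$1 ~ /^[0-9]+$/ { print $1 }'
}

# Example on the ESXi host:
#   for id in $(vim-cmd vmsvc/getallvms | list_vmids); do
#       vim-cmd hbrsvc/vmreplica.getState "$id"
#   done
```

Note that `getallvms` lists every registered VM, so VMs that aren’t configured for replication will be queried too. An annotation continuation line that happens to start with a number would also be picked up by mistake.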

The BusyBox shell on ESXi is quite limited, but a loop such as:

```shell
# Check replication state for VM IDs 38 to 43, keeping only the per-disk lines
for i in $(seq 38 43); do vim-cmd hbrsvc/vmreplica.getState $i; done | grep DiskID
```

gives me a basic overview of how my main replications are going:
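One drawback of that one-liner is that piping the whole loop through `grep DiskID` drops any indication of which VM each line belongs to. The following sketch prints a heading per VM instead. `replication_overview` is a hypothetical helper name. The sketch assumes that `vim-cmd` is on the PATH, as it is in the ESXi shell, and that the `getState` output contains `DiskID` lines, which is what the original loop filters on.

```shell
# replication_overview: hypothetical helper that prints a "VM <id>:" heading
# before each VM's per-disk replication lines, so the output shows which VM
# each DiskID line belongs to.
replication_overview() {
    for id in "$@"; do
        echo "VM $id:"
        # Keep only the per-disk lines for this VM; "|| true" stops a VM with
        # no matching lines from making the function report failure.
        vim-cmd hbrsvc/vmreplica.getState "$id" | grep DiskID || true
    done
}

# Example on the ESXi host, using the same ID range as the loop above:
#   replication_overview $(seq 38 43)
```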

In the long run this hasn’t caused too much of an issue, but it has made me think about moving my DR needs to vCloud Air…