In September 2015 VMware released version 6.2 of its Virtual Desktop Infrastructure (VDI) offering, Horizon View. View enables enterprises to virtualise their desktop estate, potentially saving thousands in hardware costs in the process. Other benefits, such as greater flexibility, are also realised.

Unfortunately, implementing a VDI project is no easy task. The process can be quite complicated, and a number of key decisions need to be made.

In this short series I will outline the path to providing a secure, reliable and highly available virtual desktop infrastructure. This first post covers the design for the series, but does not list every design decision in detail.

Please note: the following is merely a fraction of what goes into a design document and does not reflect a full design in any way, shape or form (the original document is 120 pages).

Other posts in this series:

- Design

- Installing the Connection Servers and Composer

- Creating the templates

- Configuring the RDS hosts

- Pool configuration

- Application farm configuration

- Load-balancing

- Remote access

The following design is purely hypothetical in nature, but is fairly typical of a Horizon View deployment. As with previous blog articles, the solution has been implemented in a lab beforehand; any names and IP addresses are therefore fictitious and do not represent a production environment.

The vision

To reduce desktop hardware costs by implementing a virtualised desktop solution. This must support the latest Microsoft operating system, and be accessible both internally and externally.

Current state analysis

Currently there is no desktop virtualisation solution in place. All users are assigned PCs for accessing the corporate network. Remote users have company laptops, which are expensive. Despite the cost of PCs falling year-on-year, the company would like to further reduce the costs of end-user computing.

Users have expressed a desire to access the corporate network from home. However, due to a lack of any Network Access Protection, the company security policy forbids this. Management do not trust the state of remote workers' home PCs, and therefore do not allow access.

Desktop operating system deployment is slow and laborious. The IT department has struggled with previous deployments, which has led to a decline in internal customer satisfaction.

VMware vSphere is currently used within the company for virtualising server workloads. Due to a miscalculation by a previous consultant, current utilisation stands at approximately 20% of capacity.

Requirements

Requirements are key demands on the design which should be met. The following requirements have been specified:

Constraints

Constraints are decisions which have already been made which limit the design choices available. The following constraints have been identified:

Risks

Risks are specific events which may prevent the successful deployment of the solution. The following risks have been noted:

Assumptions

Together with the above, the project team has assumed the following is in place:

Conceptual design

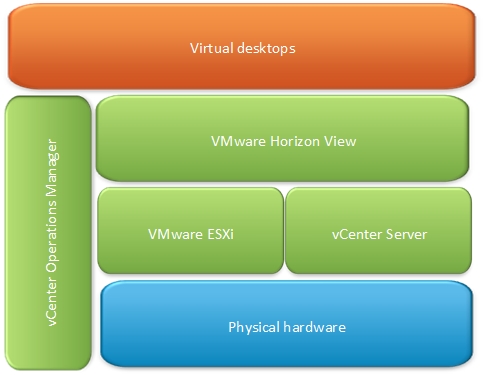

The solution will use the existing VMware architecture. VMware Horizon View will be deployed on top of VMware vCenter. vRealize Operations Manager will be employed to monitor the health of the solution.

Please note: the above does not truly reflect a conceptual solution, as physical attributes (“VMware ESXi”, “VMware Horizon View”) are listed. However, I think it’s fair to say that this series will be based around providing a virtual desktop infrastructure based on VMware View.

Logical design

The logical design captures decisions at a high level, but is sufficiently detail-agnostic to avoid locking you into any particular product. If done correctly, this lets you present your design choices to a different vendor at a later date and still receive a workable solution from them.

Example logical design choices

- A separate virtualisation infrastructure management platform will be used to separate virtual desktops from the virtual server infrastructure

- High Availability will be used to ensure that desktops are protected in the event of host failure

- Technology will be employed across the cluster to dynamically balance resources, optimising CPU, memory and storage

- To ease administration, a centralised logical network switch will be created at the cluster level

- The networking solution will be configured to dynamically manage all types of virtual machine traffic

- Existing block-based storage will be utilised

- A solution will be implemented to automatically distribute storage resources across datastores

- Virtual desktops will be segregated into pools, with each set of users assigned to the appropriate one

Physical design

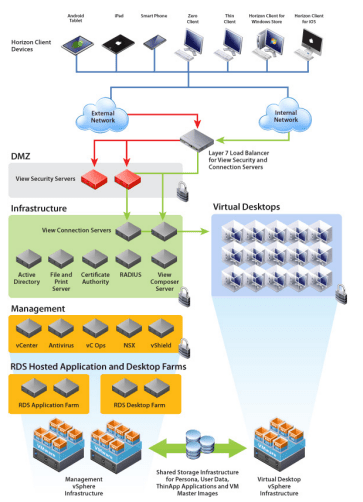

The design will incorporate two vSphere clusters: one for management services (Active Directory, DNS, View Connection Servers etc.) and another for the virtual desktops. The management cluster is already in place.

Image courtesy of VMware

The management cluster will consist of three ESXi hosts, while the VDI cluster will consist of six hosts (C1). Host sizing will be:

- Dual socket, 8 cores, 3.0GHz processor

- 256GB memory

- SD storage

- 2 x 1Gb onboard NICs

- 6 x 1Gb NICs

The VDI cluster will be configured with vSphere HA to ensure virtual desktops are restarted on another host in the event of host failure (R2).

vSphere DRS will be used in fully automated mode to dynamically balance CPU and memory resources across the cluster.

To simplify management and provide increased functionality, Distributed Virtual Switching will be configured. This will enable functionality such as Network I/O Control, load-based teaming and the ability to manage egress traffic bandwidth.

To load-balance virtual machine traffic, the “Route based on physical NIC load” teaming policy will be used.
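
For readers unfamiliar with this policy (also known as load-based teaming), the following is a rough sketch of the idea in plain Python. VMware documents that an uplink is considered saturated when it exceeds 75% utilisation over a 30-second window, at which point a virtual port is moved to a less busy uplink. The class, function and port names and the traffic figures below are all hypothetical, and the selection logic is my own simplification rather than VMware's actual algorithm.

```python
from dataclasses import dataclass, field

# Assumption: the 75% utilisation trigger VMware documents for this policy.
SATURATION_THRESHOLD = 0.75


@dataclass
class Uplink:
    name: str
    capacity_mbps: float
    ports: dict = field(default_factory=dict)  # port id -> current traffic in Mbps

    @property
    def load_mbps(self) -> float:
        return sum(self.ports.values())

    @property
    def utilisation(self) -> float:
        return self.load_mbps / self.capacity_mbps


def rebalance_once(uplinks):
    """Run one rebalancing pass and return a list of (port, source, target) moves."""
    moves = []
    if len(uplinks) < 2:
        return moves  # nothing to rebalance against
    for source in uplinks:
        while source.utilisation > SATURATION_THRESHOLD and len(source.ports) > 1:
            # Pick the least-utilised other uplink as the candidate target.
            target = min((u for u in uplinks if u is not source),
                         key=lambda u: u.utilisation)
            # Simplification: always try to move the busiest port first.
            port = max(source.ports, key=source.ports.get)
            traffic = source.ports[port]
            # Stop if the move would simply saturate the target instead.
            if (target.load_mbps + traffic) / target.capacity_mbps > SATURATION_THRESHOLD:
                break
            target.ports[port] = source.ports.pop(port)
            moves.append((port, source.name, target.name))
    return moves


if __name__ == "__main__":
    # Hypothetical uplinks and traffic, for illustration only.
    uplinks = [
        Uplink("vmnic2", 1000, {"vm-a": 500, "vm-b": 400}),
        Uplink("vmnic3", 1000, {"vm-c": 100}),
    ]
    for move in rebalance_once(uplinks):
        print(move)
```

The point of the sketch is that placement follows observed load rather than a static hash, which is why the policy requires a Distributed Virtual Switch.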

In line with the constraints, the existing IBM Fibre Channel storage architecture will be used (C2, C4). This includes the entire fabric and the XIV Gen3 SAN array.

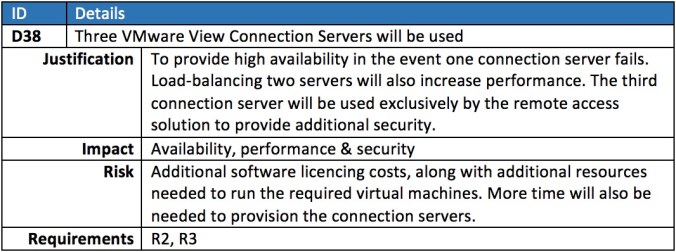

Three View Connection Servers will be used, two of which will be load balanced by the existing F5 hardware (R2, C3). The third will be used exclusively to provide remote access to virtual desktops (R3).

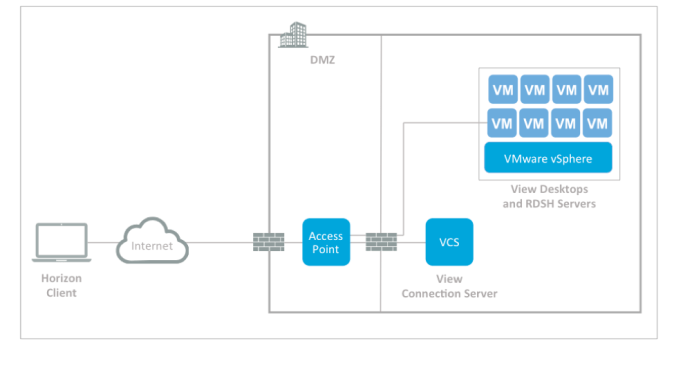

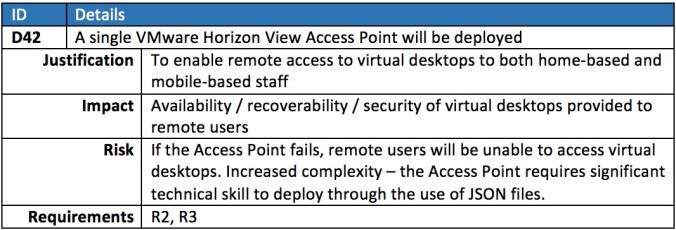

At the time of writing, the current version of Horizon View is 6.2.1, so we will deploy an Access Point in the DMZ instead of a Security Server.

The solution will consist of the following:

Image courtesy of VMware

As there is no extra money in the budget for additional load-balancers (C4), only a single Access Point will be used (R3). This, however, contravenes the requirement to avoid single points of failure (R2), and must be added to the risk register.
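
A check like this is easy to automate when reviewing a design. The sketch below is a minimal illustration in Python: the instance counts come from the physical design above, while the `Tier` class, the tier labels and the "fewer than two instances" rule are my own assumptions rather than anything from the original design document.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str          # descriptive label (hypothetical wording)
    instances: int     # number of instances in the design
    requirement: str   # requirement ID the design cites for this tier


def single_points_of_failure(tiers):
    """Return tiers that would be lost entirely if one instance failed."""
    return [t for t in tiers if t.instances < 2]


# Counts taken from the physical design: three Connection Servers
# (two load balanced, one for remote access) and one Access Point.
DESIGN = [
    Tier("Internal Connection Servers (load balanced)", 2, "R2"),
    Tier("Remote-access Connection Server", 1, "R3"),
    Tier("Access Point (DMZ)", 1, "R3"),
]

if __name__ == "__main__":
    for tier in single_points_of_failure(DESIGN):
        print(f"Risk register candidate: {tier.name}")
```

Under this simple rule the dedicated remote-access Connection Server is flagged alongside the Access Point, so it may be worth recording both against R2.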

All pools will run Windows 10 (R1). A number of View Pools will be configured to meet the functional requirements. One pool will consist of dedicated desktops for members of the Board, developers and IT operations staff (R4).

The following pools will be defined:

Virtual machine sizing has been configured as follows:

To satisfy the compute requirements, five hosts will be needed. However, to allow for N+1 redundancy, six servers will be provisioned.
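
The arithmetic behind an N+1 host count can be sketched as follows. The host figures (16 cores, assuming 8 cores per socket, and 256GB of memory) come from the host sizing above. The desktop count, per-desktop sizing and vCPU-to-core ratio are placeholders I have picked purely for illustration and are not the figures from the original design. The function name is hypothetical, and the model ignores hypervisor and memory overhead.

```python
import math


def hosts_required(desktops, vcpus_per_desktop, mem_gb_per_desktop,
                   cores_per_host, mem_gb_per_host,
                   vcpu_to_core_ratio, spare_hosts=1):
    """Return (hosts needed for the load, hosts to provision with spares)."""
    usable_vcpus_per_host = cores_per_host * vcpu_to_core_ratio
    hosts_for_cpu = math.ceil(desktops * vcpus_per_desktop / usable_vcpus_per_host)
    hosts_for_mem = math.ceil(desktops * mem_gb_per_desktop / mem_gb_per_host)
    # Whichever resource runs out first dictates the host count.
    hosts_for_load = max(hosts_for_cpu, hosts_for_mem)
    return hosts_for_load, hosts_for_load + spare_hosts


if __name__ == "__main__":
    # Placeholder workload: 300 desktops, 2 vCPU and 4GB each, 8:1 consolidation.
    needed, provisioned = hosts_required(
        desktops=300, vcpus_per_desktop=2, mem_gb_per_desktop=4,
        cores_per_host=16, mem_gb_per_host=256, vcpu_to_core_ratio=8,
    )
    print(f"Hosts for load: {needed}, with N+1: {provisioned}")
```

Sizing on the more constrained of CPU and memory, then adding the spare host, means the cluster can lose one host and still restart every desktop elsewhere.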

All templates and “Golden Master” images will be optimised according to best practices.

A floating pool will consist of linked-clones for call centre staff (R5), and each desktop will be refreshed during logoff (R6).

All pools will be automatically provisioned (R7) to reduce the burden on Operational staff.
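
To make the pool rules concrete, here is a minimal sketch that models the two pools described above and checks them against the requirement IDs cited in this post. The `PoolSpec` class, the pool names and the `validate` function are hypothetical. Where the design does not state a value (for example, the clone type of the dedicated pool), the field is left as `None` or marked as an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PoolSpec:
    name: str                      # hypothetical pool name
    users: tuple                   # user groups assigned to the pool
    assignment: str                # "dedicated" or "floating"
    clone_type: Optional[str]      # None where the design does not state it
    refresh_on_logoff: bool
    guest_os: str = "Windows 10"   # all pools run Windows 10 (R1)
    automated: bool = True         # all pools automatically provisioned (R7)


def validate(pool):
    """Return a list of rule violations for one pool (empty means OK)."""
    problems = []
    if pool.guest_os != "Windows 10":
        problems.append(f"{pool.name}: design specifies Windows 10 (R1)")
    if not pool.automated:
        problems.append(f"{pool.name}: pools must be automatically provisioned (R7)")
    if pool.assignment == "floating" and not pool.refresh_on_logoff:
        problems.append(f"{pool.name}: floating desktops must refresh at logoff (R6)")
    return problems


POOLS = [
    # refresh_on_logoff=False is an assumption; the design does not say.
    PoolSpec("dedicated-pool", ("Board", "Developers", "IT operations"),
             "dedicated", None, False),
    PoolSpec("callcentre-pool", ("Call centre",),
             "floating", "linked-clone", True),
]

if __name__ == "__main__":
    for pool in POOLS:
        print(pool.name, validate(pool) or "OK")
```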

Lastly, vRealize Operations Manager will be used to monitor the health of the environment.

Coming up

In part 2 we begin the implementation of the design by installing and configuring the VMware View Connection Servers.
